For more information about the full results for both FP16 and INT8, see the Accelerating Sparse Deep Neural Networks whitepaper. Table 2 has a sample of FP16 accuracy results that we obtained using this workflow implemented in the PyTorch Library Automatic SParsity (ASP). Across a wide range of networks, it generates a sparse model that maintains the accuracy of the dense network from Step 1.

#ANSWERWORKS RUNTIME SHOULD I REMOVE IT UPDATE#

Step 3 recovers that accuracy with enough weight update steps to let the weights converge and a high enough learning rate to let the weights move around sufficiently.

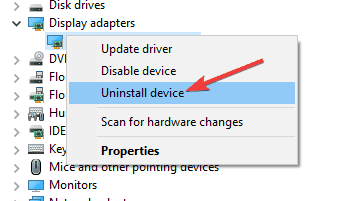

We prefer to prune values that are already close to zero.Īs you might expect, suddenly turning half of the weights in a network to zero can affect the network’s accuracy. Which weights should stay, and which should be forced to zero? We’ve found that a simple answer works well: weight magnitude. There are many ways to make pruning decisions. After the pruning stage, the sparsity pattern is fixed. This workflow uses one-shot pruning in Step 2. Repeat the original training procedure.Out of every four elements, remove just two. On the dense network, prune the weights to satisfy the 2:4 structured sparsity criteria.The goal is to start with a known-good model whose weights have converged to give useful results. We’ve developed a simple training workflow that can easily generate a 2:4 structured sparse network matching the accuracy of the dense network: Of course, performance is pointless without good accuracy. 2:4 structured sparse networks maintain accuracy Performance of Sparse Tensor Cores in the NVIDIA Ampere Architecture. Table 1 shows details on the wide variety of data types supported by Sparse Tensor Cores. So, for a sparsity of 2x, they can complete the same effective calculation in half the time. They use the metadata that is stored with the nonzeros to pull only the necessary values from the other, uncompressed operand. Sparse Tensor Cores accelerate this format by operating only on the nonzero values in the compressed matrix. A 2:4 structured sparse matrix W, and its compressed representation Such a regular pattern is easy to compress and has a low metadata overhead (Figure 1).įigure 1. There are no vector or block structures pruned together. This naturally leads to a sparsity of 50%, which is fine-grained. In each contiguous block of four values, two values must be zero. Sparse Tensor Cores accelerate a 2:4 sparsity pattern. The NVIDIA A100 GPU adds support for fine-grained structured sparsity to its Tensor Cores. 
Sparse Tensor Cores accelerate 2:4 fine-grained structured sparsity Using a simple training workflow and deploying with TensorRT 8.0, Sparse Tensor Cores can eliminate unnecessary calculations in neural networks, resulting in over 30% performance/watt gain compared to dense networks. TensorRT is an SDK for high-performance deep learning inference, which includes an optimizer and runtime that minimizes latency and maximizes throughput in production. Today, NVIDIA is releasing TensorRT version 8.0, which introduces support for the Sparse Tensor Cores available on the NVIDIA Ampere Architecture GPUs. In this post, we discuss how the NVIDIA Ampere Architecture addresses these challenges.

It may not work due to differences in the network, task, optimizer, or any hyperparameter. The trouble comes when you try to apply Sparsity X to network B.

It has been shown that network A can achieve Sparsity X. Workflow-Much of the current research in network pruning serves as useful existence proofs.This limits the potential performance benefit. Alternate pruning methods that attempt to make acceleration easier, such as coarse-grained pruning that removes blocks of weights, channels, or entire layers, can run into accuracy trouble even sooner. Accuracy-To achieve a useful speedup with fine-grained, unstructured sparsity, the network must be made sparse, which often causes accuracy loss.Standard sparse formats are inefficient for all but high sparsities. Acceleration-Fine-grained, unstructured, weight sparsity lacks structure and cannot use the vector and matrix instructions available in efficient hardware to accelerate common network operations.There have long been three challenges to realizing the promised gains. The benefits of sparsity only seem straightforward. If there are zeros in the network, then you don’t need to store or operate on them. Sparsity is one optimization technique that holds the promise of meeting these goals. A more efficient network can make better predictions in a limited time budget, react more quickly to unexpected input, or fit into constrained deployment environments. When deploying a neural network, it’s useful to think about how the network could be made to run faster or take less space. This post was updated Jto reflect NVIDIA TensorRT 8.0 updates.
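The 2:4 pattern, its compressed representation, and the prune-then-retrain workflow described in this post can be made concrete with a short, framework-free sketch. This is a minimal illustration under stated assumptions, not the ASP or TensorRT implementation:

- The helper names (`mask_2_4`, `apply_mask`, `masked_sgd_step`, `compress_2_4`, `sparse_dot`) are hypothetical.
- A weight row is a flat Python list whose length is a multiple of four.
- Retraining is reduced to a single plain SGD update.
- The metadata is kept as ordinary integers 0 to 3 rather than in a packed hardware format.

```python
# Hypothetical helpers illustrating 2:4 structured sparsity in plain Python.
# They are NOT the ASP or TensorRT API; they only mirror the ideas in the text.
from typing import List, Tuple


def mask_2_4(row: List[float]) -> List[int]:
    """Step 2 (one-shot magnitude pruning): in every contiguous group of four
    weights, mark the two largest-magnitude entries to keep (1) and the other
    two to prune (0)."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    mask: List[int] = []
    for g in range(0, len(row), 4):
        group = row[g:g + 4]
        # Positions (0-3) of the two largest magnitudes; ties keep the earlier index.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        mask.extend(1 if i in keep else 0 for i in range(4))
    return mask


def apply_mask(row: List[float], mask: List[int]) -> List[float]:
    """Zero every weight whose mask entry is 0."""
    return [v if m else 0.0 for v, m in zip(row, mask)]


def masked_sgd_step(weights: List[float], grads: List[float],
                    mask: List[int], lr: float) -> List[float]:
    """Step 3 (retraining) as one plain SGD update that keeps the sparsity
    pattern fixed: pruned positions stay at zero after every update."""
    return [(w - lr * g) if m else 0.0 for w, g, m in zip(weights, grads, mask)]


def compress_2_4(row: List[float]) -> Tuple[List[float], List[int]]:
    """Store a 2:4 sparse row as two values per group of four, plus the
    in-group position (0-3) of each stored value as metadata."""
    values: List[float] = []
    meta: List[int] = []
    for g in range(0, len(row), 4):
        group = row[g:g + 4]
        pos = [i for i, v in enumerate(group) if v != 0.0]
        if len(pos) > 2:
            raise ValueError(f"group starting at {g} violates 2:4 sparsity")
        # A group may hold fewer than two nonzeros; pad with explicit zeros
        # so that every group still stores exactly two entries.
        for i in range(4):
            if len(pos) == 2:
                break
            if i not in pos:
                pos.append(i)
        pos.sort()
        values.extend(group[i] for i in pos)
        meta.extend(pos)
    return values, meta


def sparse_dot(values: List[float], meta: List[int], x: List[float]) -> float:
    """Dot product of a compressed 2:4 row with a dense vector x. The metadata
    selects which x entries to read, so only len(x) // 2 multiplications run."""
    total = 0.0
    for k, (v, p) in enumerate(zip(values, meta)):
        total += v * x[4 * (k // 2) + p]
    return total
```

For example, pruning the group `[0.9, -0.1, 0.05, -1.2]` keeps `0.9` and `-1.2` (mask `[1, 0, 0, 1]`). These compress to values `[0.9, -1.2]` with metadata `[0, 3]`. A dense dot product over the pruned row and `sparse_dot` over the compressed row give the same result. However, `sparse_dot` touches only half of the weights, which loosely mirrors why skipping the zeros can halve the work.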